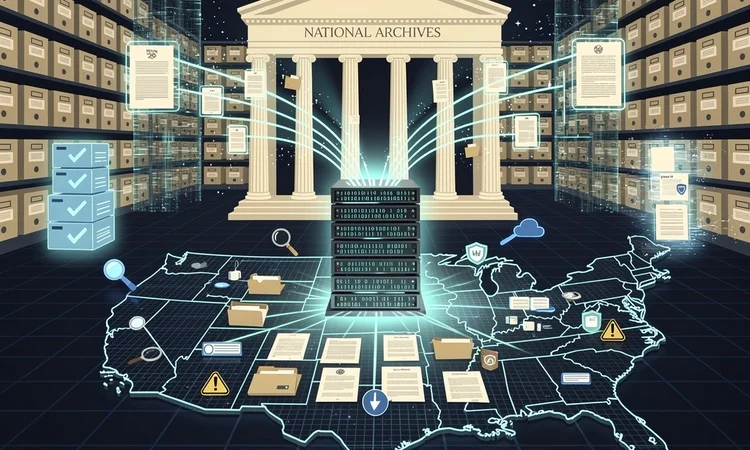

The National Archives and Records Administration is embarking on one of the most ambitious technological transformations in its 86-year history, deploying artificial intelligence to catalog and make accessible millions of historical documents that have remained largely hidden from public view. This initiative represents a fundamental shift in how America’s documentary heritage will be preserved and accessed for generations to come.

According to TechRepublic, the National Archives is implementing AI-powered tools to process its vast collection of approximately 13.5 billion pages of textual records, 40 million photographs, and countless other historical artifacts. The scale of this undertaking cannot be overstated: with current staffing levels and traditional cataloging methods, it would take centuries to properly index and make searchable the Archives’ complete holdings.

The AI system being deployed focuses primarily on optical character recognition (OCR) and natural language processing to transform handwritten and typed historical documents into searchable digital text. This technology will enable researchers, historians, and the general public to locate specific documents, names, and events across the Archives’ massive collection with unprecedented speed and accuracy. The implications extend far beyond simple searchability—this represents a democratization of historical knowledge that was previously accessible only to those with the time and resources to physically visit the Archives or navigate its limited digital catalogs.
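To make "searchable digital text" concrete, the following is a minimal sketch of how transcribed pages could be indexed for keyword search. It is not the Archives' actual system: the page identifiers, sample text, and the `build_index` and `search` helpers are all hypothetical and exist only for illustration.

```python
import re
from collections import defaultdict


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(pages):
    """Map each token to the set of page IDs whose transcription contains it."""
    index = defaultdict(set)
    for page_id, text in pages.items():
        for token in tokenize(text):
            index[token].add(page_id)
    return index


def search(index, query):
    """Return the page IDs that contain every token in the query."""
    tokens = tokenize(query)
    if not tokens:
        return set()
    result = set(index.get(tokens[0], set()))
    for token in tokens[1:]:
        result &= index.get(token, set())
    return result


# Made-up transcriptions standing in for OCR output.
pages = {
    "p1": "Muster roll of the Third Regiment",
    "p2": "Pension claim, Third Regiment",
    "p3": "Land grant survey",
}
index = build_index(pages)
matches = sorted(search(index, "third regiment"))
print(matches)
```

An inverted index of this kind is one common way to answer multi-word queries quickly, since each lookup touches only the pages that contain a given word rather than scanning every transcription.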

The Technical Infrastructure Behind Historical Discovery

The National Archives’ AI implementation relies on sophisticated machine learning models trained specifically on historical documents. Unlike modern text, historical records present unique challenges: varying handwriting styles, degraded paper quality, obsolete terminology, and inconsistent formatting across different time periods and government agencies. The AI systems must be capable of understanding context, recognizing patterns in 18th-century script as readily as 20th-century typewritten memos, and distinguishing between similar names or terms that might appear across decades of records.

The institution has partnered with technology vendors specializing in heritage digitization and archival processing. These partnerships bring together expertise in both cutting-edge AI development and the specialized requirements of historical preservation. The systems are designed to flag uncertain readings for human review, ensuring that the pursuit of efficiency doesn’t compromise accuracy—a critical consideration when dealing with primary source materials that form the foundation of historical scholarship.
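The idea of flagging uncertain readings for human review can be sketched as a simple confidence cutoff. This is an illustrative assumption, not a description of the vendors' software: the `WordReading` type, the `split_for_review` helper, and the 0.85 threshold are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical cutoff; a real system would likely tune this per collection.
REVIEW_THRESHOLD = 0.85


@dataclass
class WordReading:
    text: str          # the recognition engine's best guess for one word
    confidence: float  # assumed to be reported on a 0.0 to 1.0 scale


def split_for_review(readings, threshold=REVIEW_THRESHOLD):
    """Separate readings into auto-accepted and human-review lists."""
    accepted, needs_review = [], []
    for reading in readings:
        if reading.confidence >= threshold:
            accepted.append(reading)
        else:
            needs_review.append(reading)
    return accepted, needs_review


# Made-up readings from a single line of a document.
sample = [
    WordReading("Deed", 0.98),
    WordReading("of", 0.99),
    WordReading("Conveyance", 0.62),
]
accepted, needs_review = split_for_review(sample)
print([r.text for r in needs_review])
```

Where the threshold sits is a trade-off: a higher cutoff sends more words to archivists and costs more staff time, while a lower one lets more misreadings through unreviewed.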

Transforming Research Methodologies and Historical Scholarship

The impact on historical research methodologies promises to be revolutionary. Scholars who previously spent months or years manually searching through document collections can now conduct comprehensive searches across millions of pages in minutes. This capability enables entirely new forms of historical analysis, including large-scale pattern recognition, social network mapping across historical figures, and the identification of previously unknown connections between events and individuals.
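As a rough illustration of social network mapping, the sketch below counts how often two names are mentioned in the same document, which is one simple way to propose links between individuals. The document IDs and names are invented, and it assumes the names have already been extracted from the transcriptions by some earlier step.

```python
from collections import Counter
from itertools import combinations


def co_mention_counts(docs):
    """Count how often each pair of names appears in the same document."""
    counts = Counter()
    for names in docs.values():
        # Deduplicate and sort so each pair is counted once per document
        # and always appears in the same order.
        for pair in combinations(sorted(set(names)), 2):
            counts[pair] += 1
    return counts


# Hypothetical documents mapped to the names found in each.
docs = {
    "letter-001": ["Clerk A", "Officer B"],
    "letter-002": ["Officer B", "Surveyor C"],
    "letter-003": ["Clerk A", "Officer B", "Surveyor C"],
}
edges = co_mention_counts(docs)
for (first, second), weight in sorted(edges.items()):
    print(first, second, weight)
```

Pairs with high counts are only candidates for a real relationship. A researcher would still need to read the underlying documents to confirm that the people involved actually interacted.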

The AI tools are particularly valuable for uncovering marginalized voices and overlooked narratives in American history. Documents relating to women, minorities, and working-class individuals often exist in the Archives but have been difficult to locate systematically. By making the entire collection searchable, the AI system allows researchers to identify and study these previously obscured historical actors and their contributions to American society. This technological capability aligns with broader movements in the historical profession toward more inclusive and representative accounts of the past.

Privacy, Accuracy, and Ethical Considerations

The deployment of AI in the National Archives raises important questions about privacy, particularly regarding more recent records. While most archival materials are historical enough to avoid contemporary privacy concerns, the Archives holds records extending into the late 20th and early 21st centuries. The institution must balance the public’s right to access government records with individuals’ privacy rights, a challenge complicated by AI’s ability to rapidly cross-reference and connect disparate pieces of information.

Accuracy concerns represent another critical consideration. AI systems, despite their sophistication, are not infallible. Misread characters, misinterpreted context, or algorithmic biases could lead to incorrect transcriptions or misleading search results. The National Archives has implemented quality control measures, including human oversight of AI-generated transcriptions and the preservation of original document images alongside digital text. Researchers are encouraged to verify AI-generated transcriptions against original sources, maintaining the scholarly rigor essential to historical work.

Budgetary Realities and Institutional Challenges

The National Archives faces significant budgetary constraints that make the AI initiative both necessary and challenging. Traditional cataloging methods require substantial human resources—archivists, historians, and subject matter experts—whose salaries and benefits represent ongoing costs. AI offers a potential solution by automating much of the initial processing work, allowing human experts to focus on complex interpretive tasks and quality assurance rather than routine data entry.

However, the initial investment in AI infrastructure is substantial. The institution must acquire or develop appropriate software, train staff to use and maintain these systems, and ensure adequate computing resources to process billions of documents. These upfront costs occur against a backdrop of federal budget pressures and competing priorities for limited resources. The Archives must continually justify these expenditures to congressional appropriators and demonstrate tangible returns on investment in terms of increased public access and research productivity.

Public Engagement and Educational Applications

Beyond serving academic researchers, the AI-enhanced Archives offers new possibilities for public engagement with American history. Genealogists, one of the largest user groups for archival records, will benefit enormously from improved searchability. Family historians can more easily trace ancestors through census records, military service documents, immigration files, and other personal records scattered across the Archives’ collections.

Educational applications represent another significant opportunity. Teachers can more readily locate primary source documents relevant to curriculum topics, bringing authentic historical materials into classrooms. Students can conduct original research using the same tools available to professional historians, fostering critical thinking skills and historical literacy. The Archives is developing educational resources and lesson plans that leverage the AI-enhanced search capabilities to support K-12 and college-level instruction.

International Implications and Collaborative Opportunities

The National Archives’ AI initiative is being closely watched by archival institutions worldwide. Many countries face similar challenges with vast collections of historical records requiring cataloging and digitization. The technical approaches, best practices, and lessons learned from the American experience could inform similar projects internationally, potentially leading to collaborative efforts and shared technological infrastructure.

International collaboration could extend to standardizing metadata schemas, sharing AI training datasets for historical documents, and developing interoperable search systems that allow researchers to query multiple national archives simultaneously. Such cooperation would facilitate transnational historical research, enabling scholars to trace individuals, events, and movements across borders more effectively than ever before.

The Future of Archival Practice

The integration of AI into the National Archives represents more than a technological upgrade: it signals a fundamental reimagining of what archival institutions can and should be in the digital age. Rather than passive repositories that researchers must visit in person to access materials, archives are becoming active platforms for discovery, analysis, and public engagement. AI serves as the enabling technology for this transformation, but the vision extends to creating a more accessible, inclusive, and useful historical record.

Looking ahead, the Archives envisions AI applications beyond text recognition and search. Machine learning could help identify and restore damaged documents, predict which records are most at risk of deterioration, and even generate contextual information to help users understand historical documents. Computer vision algorithms might analyze photographs and artwork, identifying individuals, locations, and objects. Natural language processing could summarize lengthy documents or identify thematic connections across disparate records.

The success of this initiative will ultimately be measured not in technological metrics but in human terms: the dissertations written, the family histories completed, the policy insights gained, and the public understanding deepened through improved access to America’s documentary heritage. As the National Archives continues to refine and expand its AI capabilities, it is writing a new chapter in the long story of preserving and sharing the records that define the American experience. The technology may be cutting-edge, but the mission remains timeless: ensuring that the documentary evidence of our collective past remains available to inform our present and guide our future.
